As part of our AT-node project, we regularly search the literature on the use of alternative access interfaces by people with disabilities. This study popped up in our latest search: Improving the academic inclusion of a student with special needs at University Bordeaux by John Kelway and colleagues, published in the ASSETS ’18 Conference Proceedings, October 2018.

The goal of the study was to define an assistive technology intervention that would improve one student’s access to and participation in university education. After a needs assessment involving the student, the team focused on computer text entry to enhance his written work and spoken communication. I really like this study because the team followed a systematic process for their interventions, took real measurements to see what worked and what didn’t, and ended up with a solution that genuinely helped a particular individual.

Meet YN

The student in this case study is YN, a 60-year-old man with severe motor and speech impairments resulting from an accident. He is unable to speak; for basic communication, he points to letters on a low-tech paper alphabet board. YN’s arm and finger movements are “very slow and imprecise.” He can point to letters on the alphabet board and type on a computer keyboard, but his speed is far too slow to “fulfill the requirements expected of a university student.” Using a computer mouse is also quite difficult. More efficient computer interaction could improve YN’s communication and help him complete academic work in a more timely manner.

Candidate solutions

For text entry, the study focused on 3 options:

- Regular Keyboard: this was a baseline condition, using the standard keyboard in the typical way. See the paper’s Figure 3a below for YN’s hand position during typing.

- Keyboard Assistive Technology (AT): this combined several enhancements to try to improve the precision and comfort of using the keyboard. They 3D-printed a keyguard, positioned the keyboard at a more vertical angle, and 3D-printed a sliding armrest to support YN’s forearm during typing. (They arrived at this combination iteratively, after trying different options, but here I’ll just focus on their final solution.) See the paper’s Figure 3b below for the enhanced keyboard setup.

- Eyegaze Typing: this used a Tobii EyeX eye tracker with an on-screen keyboard (OptiKey, an open-source OSK). The team, including YN, initially thought this option had the most potential of the solutions tried.

Text entry rate measurements

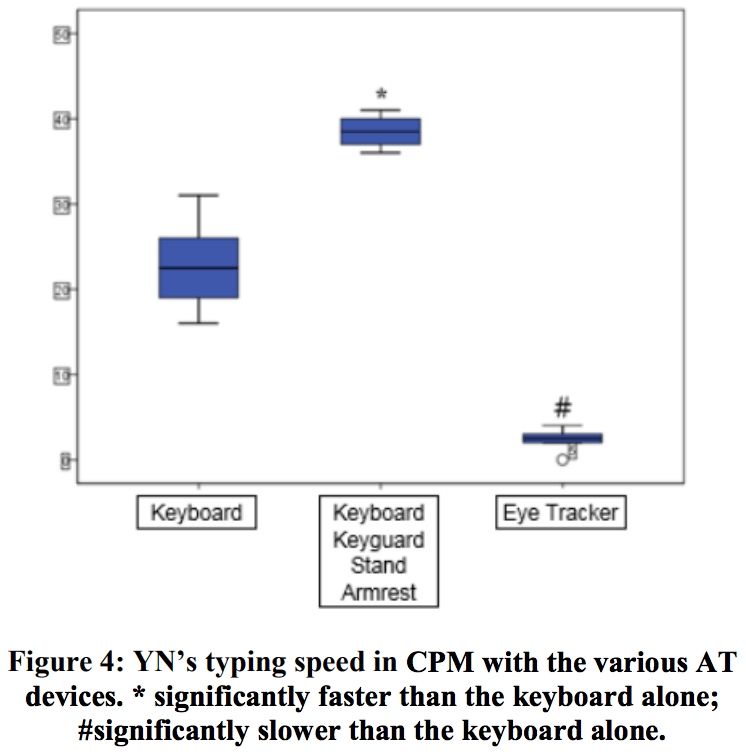

To determine how well each option met YN’s needs, the team measured typing speeds during “several tests.” From the paper’s Figure 4 (see below), I eyeballed the average for each option, using the horizontal line in each shaded box. I then divided by 5 letters per word to convert characters per minute to words per minute (wpm), and put the results in this table:

| Interface | Typing speed (wpm) |
|---|---|
| Regular Keyboard | 4.4 |
| Keyboard AT | 7.6 |
| Eyegaze Typing | 0.6 |
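To make the conversion concrete, here is a small Python sketch of the divide-by-5 step. The characters-per-minute values are back-calculated from the table (wpm × 5) rather than read from the paper’s Figure 4, so treat them as illustrative only.

```python
# Convert characters per minute (cpm) to words per minute (wpm) using the
# common convention of 5 characters per "word".
CHARS_PER_WORD = 5


def cpm_to_wpm(cpm: float) -> float:
    """Return words per minute for a given characters-per-minute rate."""
    return cpm / CHARS_PER_WORD


# Illustrative cpm values back-calculated from the wpm table (wpm * 5);
# these are NOT measurements read directly from the paper.
approx_cpm = {
    "Regular Keyboard": 22.0,
    "Keyboard AT": 38.0,
    "Eyegaze Typing": 3.0,
}

for interface, cpm in approx_cpm.items():
    print(f"{interface}: {cpm:.0f} cpm -> {cpm_to_wpm(cpm):.1f} wpm")
```

Running it reproduces the table: 4.4, 7.6, and 0.6 wpm.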

These tests were conducted using 10fastfingers.com. The site is cluttered with video advertising (although the team may have had a way of dealing with this), but it does seem to measure typing speed correctly. In particular, it gives credit only for correctly typed characters. This is good, since it avoids inflating the typing speed of a method that seems fast but is actually very error-prone.
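To see why crediting only correct characters matters, here is a minimal Python sketch of one possible scoring rule. It is a hypothetical rule for illustration, not 10fastfingers.com’s actual algorithm: typed words are compared in order with the target words, and only the characters of exactly matching words count toward the net speed. The sample sentences are made up.

```python
def credited_chars(target: str, typed: str) -> int:
    """Count characters in words typed exactly as in the target.

    Words are compared pairwise, in order; a misspelled word earns no credit.
    Spaces are not counted. (Hypothetical rule, for illustration only.)
    """
    return sum(len(t) for t, w in zip(target.split(), typed.split()) if t == w)


def net_wpm(target: str, typed: str, minutes: float, chars_per_word: int = 5) -> float:
    """Words per minute, crediting only correctly typed words."""
    if minutes <= 0:
        raise ValueError("minutes must be positive")
    return credited_chars(target, typed) / chars_per_word / minutes


def raw_wpm(typed: str, minutes: float, chars_per_word: int = 5) -> float:
    """Words per minute counting every character typed, right or wrong."""
    if minutes <= 0:
        raise ValueError("minutes must be positive")
    return len(typed) / chars_per_word / minutes


target = "the cat sat on the mat"
typed = "the cat sta on teh mat"  # two words mistyped
print(raw_wpm(typed, 1.0))          # 4.4
print(net_wpm(target, typed, 1.0))  # 2.2
```

Counting every keystroke would report 4.4 wpm here, while crediting only correct words halves that to 2.2 wpm, which is the kind of inflation the scoring guards against.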

The results validated the AT enhancements to the keyboard setup: they allowed YN to type almost twice as fast as with the standard setup. The team may still want to look for ways to improve on 7.6 wpm, but that is a much more functional speed than 4.4 wpm. The authors expressed surprise at the poor performance of eyegaze, noting that it “did not hold as much promise as we had expected.” Eyegaze performance might improve with practice, but a roughly 12-fold improvement (to approach 7.6 wpm) seems very unlikely.
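The ratios behind “almost twice as fast” and the 12-fold figure can be checked with a few lines of Python, using the eyeballed speeds from the table above:

```python
# Eyeballed average speeds (wpm) from the table above.
speeds_wpm = {"Regular Keyboard": 4.4, "Keyboard AT": 7.6, "Eyegaze Typing": 0.6}

# How much faster the Keyboard AT setup is than the regular keyboard.
at_vs_regular = speeds_wpm["Keyboard AT"] / speeds_wpm["Regular Keyboard"]

# How much eyegaze would have to improve to match the Keyboard AT setup.
eyegaze_needed = speeds_wpm["Keyboard AT"] / speeds_wpm["Eyegaze Typing"]

print(f"Keyboard AT vs. regular keyboard: {at_vs_regular:.2f}x "
      f"({(at_vs_regular - 1) * 100:.0f}% faster)")
print(f"Improvement eyegaze would need to match Keyboard AT: {eyegaze_needed:.1f}x")
```

This prints a 1.73x speedup (73% faster) for the keyboard AT and a required 12.7x improvement for eyegaze.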

Some thoughts about the results

I love that these authors reported measurements of typing speed with the candidate interfaces. While they don’t specify the exact protocol, or how much prior experience YN got with each option, the results are still a very useful snapshot of what YN was able to do with each typing method. The evidence is quite clear that the keyboard assistive technology provides better typing performance, and that eyegaze (surprisingly) is not a good fit at all for YN. While the relative ranking of the interfaces might have been evident from observation alone, the degree of difference almost certainly wouldn’t be.

Having measurements also provided YN with more specific information to help him weigh his options going forward. Is it really worth using the keyguard, keyboard stand, and armrest? Even if those things can be set up and left in place at a home workstation, there’s still some overhead involved in setup and maintenance. Knowing that the typing speed is 73% faster with that “stuff” provides a more direct way to evaluate the cost-benefit of using the assistive technology. Certainly preference and comfort are also part of that equation.
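One way to make that cost-benefit concrete is to translate speeds into time per assignment. The sketch below assumes a hypothetical 500-word assignment (a length chosen only for illustration) and estimates raw typing time alone, ignoring editing, thinking time, and setup:

```python
def minutes_to_type(words: int, wpm: float) -> float:
    """Raw typing time in minutes for a given word count and speed."""
    if wpm <= 0:
        raise ValueError("wpm must be positive")
    return words / wpm


ASSIGNMENT_WORDS = 500  # hypothetical assignment length, for illustration only

regular = minutes_to_type(ASSIGNMENT_WORDS, 4.4)      # Regular Keyboard
keyboard_at = minutes_to_type(ASSIGNMENT_WORDS, 7.6)  # Keyboard AT

print(f"Regular Keyboard: {regular:.0f} min")
print(f"Keyboard AT: {keyboard_at:.0f} min")
print(f"Time saved: {regular - keyboard_at:.0f} min")
```

Under these assumptions, the keyboard AT cuts roughly 114 minutes of typing to about 66, saving around 48 minutes per assignment.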

As a quick note, the study also looked at mouse alternatives with YN, although the only performance metric reported was accuracy, not speed. The trackball produced notable gains in targeting accuracy (about 90%) compared with the standard mouse (about 60%). The touchpad came in second, at about 75% accuracy. Using eyegaze as a mouse was a distant fourth, averaging less than 20% accuracy.

Take-home points

- The right assistive technology can make a significant positive difference.

- With measurements, we can demonstrate how much of a difference, at least in certain domains.

- If you want to take similar measurements, take a look at KPR’s software, including Compass, and our free wizards: Keyboard Wizard, Pointing Wizard, and Scanning Wizard.

What are your experiences with taking similar measurements in your work?